Session 10: Seeing to it that and Obligations

(Update: There was unfortunately a mistake in the definition of optimal choices. This has been corrected now. [2019-07-01 Mon 22:31])

After having covered many of the essential aspects of modelling actions and choices of individual agents and groups of agents, we will now finally come to our central topic: normative reasoning. How can we, for instance, express that an agent ought to study?

Ought to be

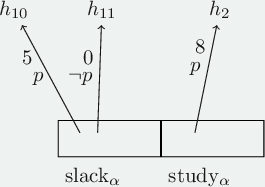

One idea that was successfully applied in the context of Standard Deontic Logic (SDL) is to use the notion of deontically ideal worlds. In our case, it seems more fitting though to speak of ideal histories instead, since the choices of our agents narrow down the bundle of histories \(H_m\) available at a given moment \(m\). So, we could single out a (non-empty) subset \(\mathsf{Ought}_m\) of \(H_m\) containing histories that conform to the given deontic standards. Adding \(\mathsf{Ought}_m\) to our stit frames we end up with deontic stit frames as tuples \(\langle \mathsf{Moments}, <, \mathsf{Agents}, \mathsf{Choice}, \mathsf{Ought} \rangle\).

Horty proposes, for instance, a consequentialist approach in which each history \(h\) comes with a number \(\mathsf{u}(h)\) representing its utility (think of the usual candidates that contribute to a moral notion of utility such as the amount of happiness, the amount of suffering, etc.). One can then define \(\mathsf{Ought}_m\) as those histories in \(H_m\) that come with maximal utility.1 The examples in this blog post will be phrased in this utilitarian spirit. Let’s look at one:

In this example the ideal history at our moment \(m\) is \(h_2\) since it has the highest utility. (Note that an ideal history need not be unique, but there will always be at least one at each given moment.)

Now, once we have singled out our ideal histories, we can define what ought to be just as in SDL:2

- \(m/h \models \mathsf{O} A\) iff for all \(h^{\prime} \in \mathsf{Ought}_m\) we have \(m/h^{\prime} \models A\).

- \(m/h \models \mathsf{P}A\) iff \(m/h \models \neg \mathsf{O} \neg A\).

So, in our example we have:

- \(m/h_i \models \mathsf{O}p\) for any \(i \in \{ {10}, {11}, 2 \}\).

It ought to be that the exam is passed.
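The truth conditions above can be sketched in a few lines of Python. This is only an illustration: the concrete utilities and the valuation of \(p\) are hypothetical (the text only fixes that \(h_2\) has the highest utility and that \(p\) holds on it), and the names `utility`, `holds`, `ought`, `O` and `P` are our own choices rather than Horty's notation.

```python
# Histories through moment m with hypothetical utilities
# (the text only tells us that h2 is the best one).
utility = {"h10": 3, "h11": 1, "h2": 8}

# Hypothetical valuation: the set of histories on which p holds at m.
holds = {"p": {"h10", "h2"}}


def ought(u):
    """Ought_m: the histories of maximal utility."""
    best = max(u.values())
    return {h for h, value in u.items() if value == best}


def O(prop, u):
    """m/h |= O A iff A holds on every ideal history (independent of h)."""
    return ought(u) <= prop


def P(prop, u):
    """P A is defined as 'not O not A'."""
    complement = set(u) - prop
    return not O(complement, u)


ideal = ought(utility)
print(ideal)                    # {'h2'}
print(O(holds["p"], utility))   # True
print(P(holds["p"], utility))   # True
```

Since the truth of \(\mathsf{O}A\) does not depend on the history of evaluation, `O` takes no history argument.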

Given the similarity to SDL, it should not surprise you that we get similar logical properties, such as:

- If \(\mathsf{O}A\) and \(\mathsf{O}B\) then \(\mathsf{O}(A \wedge B)\).

- \(\mathsf{O}(A \rightarrow B)\) implies \(\mathsf{O} A \rightarrow \mathsf{O}B\).

- \(\mathsf{O}A\) implies \(\mathsf{P}A\).

Since we have a necessity operator \(\Box\) in the context of stit-logic, we also get:

- If \(\Box A\) then \(\mathsf{O}A\).

- \(\mathsf{O} A \rightarrow \Diamond A\).

The \(\mathsf{O}\) operator as defined above is stronger than the one of SDL. For instance, we also get:

- \(\mathsf{O}(\mathsf{O}A \rightarrow A)\)

- \(\mathsf{O} \mathsf{O}A \leftrightarrow \mathsf{O}A\) and

- \(\neg \mathsf{O} A\) implies \(\mathsf{O} \neg \mathsf{O} A\).

Exercise. Show that the latter three properties hold.

- Assume for a contradiction that \(m/h \not\models \mathsf{O} ( \mathsf{O} A \rightarrow A)\).

- Thus, there is a \(h^{\prime} \in \mathsf{Ought}_m\) for which \(m/h^{\prime} \not\models (\mathsf{O} A \rightarrow A)\).

- Hence, (a) \(m/h^{\prime} \models \mathsf{O} A\) and (b) \(m/h^{\prime} \not\models A\).

- By (a), \(m/h^{\prime} \models A\) since \(h^{\prime} \in \mathsf{Ought}_m\). This contradicts (b).

- We show both directions separately.

- (\(\rightarrow\)). Suppose \(m/h \models \mathsf{O}\mathsf{O} A\).

- Thus, for all \(h^{\prime} \in \mathsf{Ought}_m\), \(m/h^{\prime} \models \mathsf{O}A\).

- Thus, for all \(h^{\prime} \in \mathsf{Ought}_m\), \(m/h^{\prime} \models A\).

- Thus, \(m/h \models \mathsf{O} A\).

- (\(\leftarrow\)). We show the contraposition. Suppose \(m/h \not\models \mathsf{O}\mathsf{O} A\).

- Thus, there is a \(h^{\prime} \in \mathsf{Ought}_m\) for which \(m/h^{\prime} \not\models \mathsf{O} A\).

- Thus, there is a \(h^{\prime\prime} \in \mathsf{Ought}_m\) for which \(m/h^{\prime\prime} \not\models A\).

- Thus, \(m/h \not\models \mathsf{O}A\).

- We show the contraposition. Suppose \(m/h \mathop{\not\models} \mathsf{O} \neg \mathsf{O} A\).

- Thus, there is a \(h^{\prime} \in \mathsf{Ought}_m\) for which \(m/h^{\prime} \mathop{\not\models} \neg \mathsf{O}A\) and hence \(m/h^{\prime} \models \mathsf{O} A\).

- Thus, for all \(h^{\prime\prime} \in \mathsf{Ought}_m\), \(m/h^{\prime\prime} \models A\).

- Thus, \(m/h \models \mathsf{O} A\) and thus also \(m/h \mathop{\not\models}\neg \mathsf{O}A\).
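As a sanity check of these three proofs, one can brute-force the properties on a small set of histories. The sketch below is our own illustration: it treats a formula as the set of histories (at a fixed moment) on which it holds, and the three history names are arbitrary.

```python
from itertools import chain, combinations

histories = ["h1", "h2", "h3"]  # arbitrary small bundle H_m
everything = frozenset(histories)


def subsets(items):
    """All subsets of items, as frozensets."""
    return [frozenset(c) for c in chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))]


def O(prop, ought):
    """Truth set of O A: all of H_m if Ought_m is inside |A|, else empty."""
    return everything if ought <= prop else frozenset()


def neg(prop):
    return everything - prop


def implies(a, b):
    return neg(a) | b


checked = 0
for ought in subsets(histories):
    if not ought:
        continue  # Ought_m is required to be non-empty
    for A in subsets(histories):
        OA = O(A, ought)
        assert O(implies(OA, A), ought) == everything             # O(OA -> A)
        assert O(OA, ought) == OA                                 # OOA <-> OA
        assert implies(neg(OA), O(neg(OA), ought)) == everything  # not OA -> O not OA
        checked += 1

print(checked)  # number of (Ought_m, |A|) combinations checked
```

Such a check is of course no substitute for the proofs, but it quickly exposes mistakes in a candidate principle.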

So far we have not really talked about agentive obligations like “You ought to study.” But maybe we have all necessary tools already in our toolbox. The idea is to express

- “Anne ought to see to it that \(A\).” as

- “It ought to be that Anne sees to it that \(A\).”

The latter can be expressed by \(\mathsf{O} [ \mathsf{Anne} ~\mathsf{cstit{:}}~ A]\).3

In our example, we have:

- \(m/h_i \models \mathsf{O} [\alpha ~\mathsf{cstit{:}}~ p]\).

Our agent ought to see to it that she passes the exam.

Recall that one of the core problems of SDL was reasoning about contrary-to-duty (CTD) obligations. In Horty (2001) we find the following example demonstrating that in the deontic stit framework one can deal with CTD-obligations, at least when they have a temporal dimension.4 Consider the situation where

- Anne has to fly to her aunt.

- If she, however, doesn’t come, she had better call her.

A stit model of the situation may, for instance, look as follows:

Some observations:

- At the moment \(m\) the ideal history is \(h_3\) with utility 8.

- At the moment \(m_1\) the ideal history is \(h_{21}\), where she calls her aunt. Clearly, in this sub-ideal scenario, calling her aunt is the best thing to do.

Note also that, were we to use only discrete distinctions between ideal and non-ideal worlds (e.g., via assignments of 0s and 1s to histories), we would have to make our assignments in a moment-relative way. However, if we allow for utilities more broadly conceived, we can make our assignments globally, i.e., context-independently, and still model CTD cases like the one above.

Horty presents several potential objections against modelling ought-to-dos in terms of ought-to-bes.

The first is the worry that it may conflate two types of interpretations of obligations. Take as an example the sentence “Albert ought to win the medal.”

- Interpretation 1 (Situational): He trained so very hard and it has been remarked that already last year he should have won it. He just deserves it.

- Interpretation 2 (Agent-implicating): Albert had better train hard; he ought to win the medal for his team.

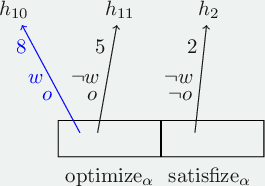

However, the deontic stit framework can distinguish these notions with ease. Where \(w\) is the statement “Albert wins the medal.” we can distinguish \(\mathsf{O} w\) from \(\mathsf{O} [\alpha ~\mathsf{cstit{:}}~ w]\). In order to see that these two formulations are not equivalent in the deontic stit framework consider:

Here it is not in the power of agent \(\alpha\) (Albert) to win the medal, but he can optimize his performance and thereby enforce an outcome with utility at least 5 (he doesn’t win, but performs well) and at most 8 (he wins). If he only satisfices, he will end up with utility 2. We have for \(h \in \{ h_{10}, h_{11}, h_2 \}\):

- \(m/h \models \mathsf{O} w\)

- \(m/h\models \neg \mathsf{O} [\alpha ~\mathsf{cstit{:}}~ w]\)

- \(m/h \models \mathsf{O} [\alpha ~\mathsf{cstit{:}}~ o]\)

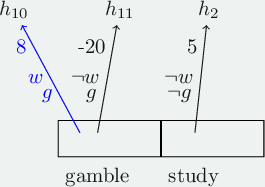

We now come to a deeper problem: the gambling problem. Consider the scenario, where \(g\) stands for “gambling” and \(w\) for “winning”:

We have, for instance, the following:

- \(m/h_i \models \mathsf{O}w\).

- \(m/h_i \models \mathsf{O}g\).

- \(m/h_i \models \mathsf{O} [\alpha ~\mathsf{cstit{:}}~ g]\)

- \(m/h_{i} \models \mathsf{O} [\alpha ~\mathsf{cstit{:}} \neg [\alpha ~\mathsf{cstit{:}}~ w]]\) and

- \(m/h_i \models \mathsf{O} \neg [\alpha ~\mathsf{cstit{:}}~ w]\).

Exercise: Check for yourself why these statements hold and whether you find them intuitive.

It seems questionable to demand of her that she see to it that she gambles (item 3), given the significant disutility she risks by this choice.5

Towards Ought to do: sure-thing reasoning

A more cautious approach to the modelling of obligations would be to require that the obliged action dominates its alternatives in the sense that every history in it is at least as good as every history in an alternative choice of action. In what follows, we will refine this idea some more. Following this strategy it is useful to compare propositions \(|A|\) and \(|B|\) by:6

- \(|A| \le |B|\) ("\(|B|\) is weakly preferred over \(|A|\)") iff for all \(h_a \in |A|\) and for all \(h_b \in |B|\) we have \(\mathsf{u}(h_a) \le \mathsf{u}(h_b)\).

- \(|A| < |B|\) ("\(|B|\) is strictly preferred over \(|A|\)") iff \(|A| \le |B|\) and not \(|B| \le |A|\).

For instance, in our gambling scenario above gambling is not weakly preferred over not gambling since \(\mathsf{u}(h_{11}) = -20 < \mathsf{u}( h_{2}) = 5\). So, the new and more cautious approach would avoid the obligation to gamble.
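The two preference relations are easy to sketch in code. In the snippet below, the utilities of \(h_{11}\) and \(h_2\) are the ones quoted above, while the utility of \(h_{10}\) (gambling and winning) is a hypothetical value chosen only so that it is the best history; the function names are our own.

```python
# u(h11) and u(h2) are taken from the text; u(h10) is a hypothetical value.
utility = {"h10": 10, "h11": -20, "h2": 5}

gamble = {"h10", "h11"}   # |g|
not_gamble = {"h2"}       # |not g|


def weakly_preferred(b, a, u):
    """|A| <= |B|: every history in B is at least as good as every history in A."""
    return all(u[ha] <= u[hb] for ha in a for hb in b)


def strictly_preferred(b, a, u):
    """|A| < |B|: |A| <= |B| holds but |B| <= |A| does not."""
    return weakly_preferred(b, a, u) and not weakly_preferred(a, b, u)


# Gambling is not weakly preferred over not gambling: u(h11) < u(h2).
print(weakly_preferred(gamble, not_gamble, utility))   # False
# Under the assumed u(h10), the converse fails as well.
print(weakly_preferred(not_gamble, gamble, utility))   # False
```

Note that the relation is quite demanding: it compares every history of one proposition with every history of the other, so two propositions can easily be incomparable.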

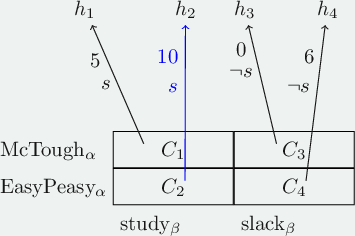

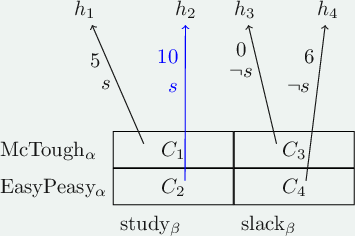

Our approach may be a tad too cautious, though. Consider the following example. We have two agents, \(\beta\) our student, and \(\alpha\) a person working in the course administration of the university. \(\beta\) has two choices, to study for the exam or to slack. \(\alpha\) decides on who will be the examiner:

- Prof. McTough, known for very hard exams. Good marks are not easy to get.

- Prof. EasyPeasy, known for her simple exams.

We get the following stit-scenario:

Note that \(s\) is not weakly preferred over \(\neg s\). Nevertheless, a simple reasoning process seems to recommend itself in situations like this. It goes as follows:

- If McTough is the examiner then studying is better than slacking (since \(\mathsf{u}(h_1) > \mathsf{u}(h_3)\)).

- If EasyPeasy is the examiner then studying is better than slacking (since \(\mathsf{u}(h_2) > \mathsf{u}(h_4)\)).

- Thus, studying is better than slacking.

This kind of “sure-thing” or “(case-based) dominance” reasoning is a form of reasoning by cases. The reasoning task is to decide among actions \(K_1, \dotsc, K_n\). For this we make an exhaustive grouping of possible outcomes (our “cases”): \(C_1, \dotsc, C_m\). Any choice \(K_i\) for which there is no \(K_l\) that is strictly preferred over \(K_i\) relative to some case \(C_j\), is a permissible choice for our agent.

In our example the two cases stem from the possible choices of our other agent \(\alpha\), the course administrator: to choose McTough or to choose EasyPeasy as the examiner. Recall that the choices of different agents are independent. This is indeed quite important for sure-thing reasoning to succeed. We will in the next sub-section investigate this a bit further by taking a look at the Newcomb puzzle.

However, before doing so we show how to give an alternative account of “ought-to-do” based on the sure-thing principle, where the cases in the underlying case distinction originate from the choices made by other agents.

We first illustrate this in the context of 2 agents. Suppose \(\alpha\) decides about the choices \(K_1, \dotsc, K_n\) at moment \(m\). The other agent, \(\beta\), has the choices \(K_1^{\prime}, \dotsc, K_l^{\prime}\).

- We say that \(K_i\) weakly dominates \(K_j\) (where \(1 \le i,j \le n\)) iff for every choice \(K_r^{\prime}\) of \(\beta\) (where \(1 \le r \le l\)), \(K_r^{\prime} \cap K_i\) is weakly preferred over \(K_r^{\prime} \cap K_j\).

- We say that \(K_i\) strongly dominates \(K_j\) (where \(1 \le i,j \le n\)) iff it weakly dominates \(K_j\) and \(K_j\) does not weakly dominate \(K_i\).

- \(K_i\) is an optimal choice for \(\alpha\) iff it is not strongly dominated by any alternative choice \(K_j\) of \(\alpha\).

We then define

- \(m/h \models \odot [\alpha ~\mathsf{cstit{:}}~ A]\) iff for all optimal choices \(K_i\) of \(\alpha\) at \(m\), \(K_i \subseteq |A|\).

Before we generalize this to the case of \(n\) many agents, let us take a look at an example.

In our scenario we have for all histories \(h \in \{ h_1, \dotsc, h_4 \}\),

- \(m/h \models \odot [\beta ~\mathsf{cstit{:}}~ s]\).
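The two-agent definitions can be sketched as follows. The utilities are hypothetical: they are only chosen to respect what the text states, namely \(\mathsf{u}(h_1) > \mathsf{u}(h_3)\), \(\mathsf{u}(h_2) > \mathsf{u}(h_4)\), and that \(s\) is not weakly preferred over \(\neg s\). The function names are our own.

```python
# Hypothetical utilities respecting u(h1) > u(h3) and u(h2) > u(h4).
utility = {"h1": 4, "h2": 9, "h3": 1, "h4": 6}

# Choices are sets of histories.
beta_choices = {"study": {"h1", "h2"}, "slack": {"h3", "h4"}}
alpha_choices = {"McTough": {"h1", "h3"}, "EasyPeasy": {"h2", "h4"}}


def weakly_preferred(b, a):
    """Every history in b is at least as good as every history in a."""
    return all(utility[x] <= utility[y] for x in a for y in b)


def weakly_dominates(k, k_other, cases):
    """k is weakly preferred over k_other within every case."""
    return all(weakly_preferred(k & c, k_other & c) for c in cases)


def strongly_dominates(k, k_other, cases):
    return (weakly_dominates(k, k_other, cases)
            and not weakly_dominates(k_other, k, cases))


def optimal(choices, cases):
    """Names of the choices that no alternative strongly dominates."""
    return {name for name, k in choices.items()
            if not any(strongly_dominates(other, k, cases)
                       for other_name, other in choices.items()
                       if other_name != name)}


def odot(choices, cases, prop):
    """The dominance ought: every optimal choice guarantees prop."""
    return all(choices[name] <= prop for name in optimal(choices, cases))


cases = list(alpha_choices.values())   # the cases are alpha's choices
s = beta_choices["study"]              # |s|

print(weakly_preferred(s, beta_choices["slack"]))  # False
print(optimal(beta_choices, cases))                # {'study'}
print(odot(beta_choices, cases, s))                # True
```

With these numbers studying is not weakly preferred over slacking outright, yet it strongly dominates slacking once we compare case by case.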

Exercise. Consider the following scenario where \(g\) stands for “great course”.

What kind of \(\odot\)-obligations do the student and the professor have?

Some observations.

- \(\mathsf{Ought}_m = \{ h_2 \}\).

- The optimal choice of \(\mathsf{stu}\) is \(\mathtt{study} = C_2 \cup C_4 = \{ h_2, h_{41}, h_{42} \}\).

- First, we show that \(\mathtt{study}\) weakly dominates \(\mathtt{slack}\) for \(\mathsf{stu}\).

- \(\mathtt{study}_{\mathsf{stu}} \cap \mathtt{prep}_{\mathsf{prof}}= C_2\) is weakly (even strictly) preferable to \(\mathtt{slack}_{\mathsf{stu}} \cap \mathtt{prep}_{\mathsf{prof}} = C_1\).

- \(\mathtt{study}_{\mathsf{stu}} \cap \mathtt{research}_{\mathsf{prof}}= C_4\) is weakly (even strictly) preferable to \(\mathtt{slack}_{\mathsf{stu}} \cap \mathtt{research}_{\mathsf{prof}} = C_3\).

- Now we show that \(\mathtt{study}\) strongly dominates \(\mathtt{slack}\) and so \(\mathtt{slack}\) is not an optimal choice for \(\mathsf{stu}\).

- \(\mathtt{slack}_{\mathsf{stu}} \cap \mathtt{prep}_{\mathsf{prof}} = C_1\) is not weakly preferable to \(\mathtt{study}_{\mathsf{stu}} \cap \mathtt{prep}_{\mathsf{prof}}= C_2\).

- Note that \(m/h \models \neg \odot [ \mathsf{stu} ~\mathsf{cstit{:}}~ g]\). The reason is that \(m/h_{41} \models \neg g\) and \(h_{41} \in \mathtt{study}\).

- Similarly, \(\mathtt{prep}\) is the optimal choice for our professor. (Check this for yourself).

- Nevertheless, \(m/h \models \neg \odot [ \mathsf{prof} ~\mathsf{cstit{:}}~ g]\) since again \(m/h_{11} \models \neg g\) and \(h_{11} \in \mathtt{prep}\).

- Finally, the optimal choice for the group \(\{ \mathsf{stu}, \mathsf{prof} \}\) is \(C_2\). (See below for the definitions.) To see this, notice that it strongly dominates all other choices of \(\{ \mathsf{stu}, \mathsf{prof} \}\).

- As a consequence, \(m/h \models \odot [ \{ \mathsf{stu}, \mathsf{prof}\} ~\mathsf{cstit{:}}~ g]\).

Notice that there can be ways to fulfill the individual obligations while violating the group obligation (e.g., in the following exercise, if both invite A).

Exercise. Rick and Morty are organizing a party. It would be good if A and B get invited. We have the following table (each number represents one history with the respective utility):

| Rick \ Morty | invite A | invite B | slack |
| --- | --- | --- | --- |
| invite A | 2 | 10 | 1 |
| invite B | 10 | 2 | 1 |
| slack | 1 | 1 | 0 |

What \(\odot\)-obligations do Rick and Morty have?

Some observations.

- The optimal choices for Rick are \(\mathtt{invite A}\) and \(\mathtt{invite B}\). Note that these two choices strongly dominate \(\mathtt{slack}\), but neither weakly/strongly dominates the other.

- Similar for Morty.

- So both have the obligation to (invite A or invite B).

- Neither has the obligation to invite A and neither has the obligation to invite B.

- Let us now consider the group consisting of Rick and Morty. (See below for the definitions.)

- We have two optimal choices:

- The one where Rick invites A and Morty invites B.

- The one where Rick invites B and Morty invites A.

- So, as a group they have the obligation to invite A and B.
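These observations can be checked mechanically. The sketch below encodes the table from the exercise (one history per cell, identified by the pair of Rick's and Morty's options) and reuses the dominance definitions; for the group, the only case is the whole set of histories, since no agent is left outside the group. All function and variable names are our own.

```python
options = ["invite A", "invite B", "slack"]
rows = [[2, 10, 1],    # Rick invites A
        [10, 2, 1],    # Rick invites B
        [1, 1, 0]]     # Rick slacks
# A history is the pair (Rick's option, Morty's option).
utility = {(r, m): rows[i][j]
           for i, r in enumerate(options)
           for j, m in enumerate(options)}
histories = set(utility)

rick = {o: {h for h in histories if h[0] == o} for o in options}
morty = {o: {h for h in histories if h[1] == o} for o in options}
group = {h: {h} for h in histories}   # a joint choice fixes the history


def weakly_preferred(b, a):
    return all(utility[x] <= utility[y] for x in a for y in b)


def weakly_dominates(k, k_other, cases):
    return all(weakly_preferred(k & c, k_other & c) for c in cases)


def optimal(choices, cases):
    """Names of the choices not strongly dominated by an alternative."""
    def strongly_dominated(k):
        return any(weakly_dominates(o, k, cases)
                   and not weakly_dominates(k, o, cases)
                   for o in choices.values() if o != k)
    return {name for name, k in choices.items() if not strongly_dominated(k)}


rick_optimal = optimal(rick, list(morty.values()))
morty_optimal = optimal(morty, list(rick.values()))
group_optimal = optimal(group, [histories])

print(sorted(rick_optimal))    # ['invite A', 'invite B']
print(sorted(group_optimal))

# Both inviting A is compatible with each individual's optimal choices,
# but it is not an optimal choice of the group.
both_invite_a = ("invite A", "invite A")
print(both_invite_a in group_optimal)   # False
```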

Let us generalize the approach to the context of multiple agents, where \(\emptyset \subset \mathcal{G} \subseteq \mathsf{Agents}\) and \(\mathsf{Agents} \setminus \mathcal{G} = \{ \beta_1, \dotsc, \beta_k \}\):

- We say that \(K \in \mathsf{choice}_{\mathcal{G}}^m\) weakly dominates \(K^{\prime} \in \mathsf{choice}_{\mathcal{G}}^m\) iff for every choice \(K_1 \in \mathsf{choice}_{\beta_1}^m\), …, \(K_k \in \mathsf{choice}_{\beta_k}^m\), \(K \cap K_1 \cap \cdots \cap K_k\) is weakly preferable to \(K^{\prime} \cap K_1 \cap \cdots \cap K_k\).

- We say that \(K\) strongly dominates \(K^{\prime}\) iff it weakly dominates \(K^{\prime}\) and \(K^{\prime}\) does not weakly dominate \(K\).

- \(K \in \mathsf{choice}_{\mathcal{G}}^m\) is an optimal choice for \(\mathcal{G}\) iff it is not strongly dominated by any alternative choice \(K^{\prime} \in \mathsf{choice}_{\mathcal{G}}^m\).

- Finally, \(m/h \models \odot [\mathcal{G} ~\mathsf{cstit{:}}~A]\) iff for all optimal choices \(K\) of \(\mathcal{G}\) at \(m\), \(K \subseteq |A|\).

Exercise. Rick and Morty are going to a party. To satisfy the host, it’s better to bring something along. Read the following table as in the previous exercise and determine individual and group obligations.

| Rick \ Morty | wine | beer | nothing |
| --- | --- | --- | --- |
| wine | 1 | 1 | 1 |
| snacks | 1 | 1 | 0 |
| nothing | 1 | 0 | 0 |

Some observations.

- The optimal choice for Rick is to bring wine. It strongly dominates the choice to bring snacks and to do nothing.

- The optimal choice for Morty is also to bring wine. It strongly dominates the choice to bring beer and to do nothing.

- Thus, \(m/h \models \odot [ \mathtt{Rick} ~\mathsf{cstit{:}}~ \mathtt{wine}]\) and \(m/h \models \odot [ \mathtt{Morty} ~\mathsf{cstit{:}}~ \mathtt{wine}]\).

- The optimal choices for the group are:

- At least one of them brings wine.

- Bring snacks and beer.

- Thus, \(m/h \models \odot [ \{ \mathtt{Rick}, \mathtt{Morty}\} ~\mathsf{cstit{:}}~ \mathtt{wine} \vee ( \mathtt{snacks} \wedge \mathtt{beer})]\).

Notice that there is a way to fulfill the group obligation which violates both individual obligations.
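The general definition for groups can be sketched along the same lines. The snippet below is an illustration with our own naming: the cases for a group \(\mathcal{G}\) are the joint choices of all agents outside \(\mathcal{G}\), and for the group of all agents there is exactly one case, the full set of histories. It uses the table from this exercise, and \(\mathtt{wine}\) is read as "somebody brings wine".

```python
from itertools import product

options = {"Rick": ["wine", "snacks", "nothing"],
           "Morty": ["wine", "beer", "nothing"]}
names = list(options)   # position of each agent in a history tuple
rows = [[1, 1, 1],
        [1, 1, 0],
        [1, 0, 0]]
utility = {(r, m): rows[i][j]
           for i, r in enumerate(options["Rick"])
           for j, m in enumerate(options["Morty"])}
histories = frozenset(utility)


def choices_of(group):
    """Joint choices of a group, keyed by the members' options."""
    members = [a for a in names if a in group]
    result = {}
    for combo in product(*(options[a] for a in members)):
        result[combo] = frozenset(
            h for h in histories
            if all(h[names.index(a)] == opt for a, opt in zip(members, combo)))
    return result


def weakly_preferred(b, a):
    return all(utility[x] <= utility[y] for x in a for y in b)


def weakly_dominates(k, k_other, cases):
    return all(weakly_preferred(k & c, k_other & c) for c in cases)


def optimal(group):
    own = choices_of(group)
    # Cases: joint choices of the agents outside the group.
    cases = list(choices_of(set(names) - set(group)).values())

    def strongly_dominated(k):
        return any(weakly_dominates(o, k, cases)
                   and not weakly_dominates(k, o, cases)
                   for o in own.values() if o != k)
    return {combo for combo, k in own.items() if not strongly_dominated(k)}


def odot(group, prop):
    own = choices_of(group)
    return all(own[combo] <= prop for combo in optimal(group))


wine = frozenset(h for h in histories if "wine" in h)
wine_or_snacks_and_beer = wine | {("snacks", "beer")}

print(optimal({"Rick"}), optimal({"Morty"}))
print(odot({"Rick", "Morty"}, wine_or_snacks_and_beer))   # True
print(odot({"Rick", "Morty"}, wine))                      # False
```

The last line reflects the observation above: bringing snacks and beer is an optimal group choice in which nobody brings wine.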

Newcomb’s Puzzle

A well-known puzzle, Newcomb’s problem, illustrates that things can easily get tricky where the sure-thing principle is involved.7

Suppose a super-intelligent being \(\Omega\) offers you two boxes:

- Box A is closed and \(\Omega\) claims there may or may not be 1.000.000€ inside.

- Box B is open with 1.000€ inside.

\(\Omega\) tells you that you have two options:

- You play the 1-box game and take only Box A (the closed one). Whatever is inside is yours.

- You play the 2-box game and take both boxes. Whatever is inside is yours.

Which game do you play?

… OK, sure. But here’s the catch: \(\Omega\) is extremely good at predicting your choices. In fact she has a 90% accuracy rate! She doesn’t tell you what choice she predicted for this game, but what she tells you is the following:

- If I predicted that you play 1-box, I put 1.000.000€ in box A.

- If I predicted that you play 2-box, I put nothing in box A.

Which game do you play?

Let us analyze the situation. First we use the sure-thing principle and reason as follows:

| | play 1-box | play 2-box |
| --- | --- | --- |
| money inside | 1.000.000€ | 1.001.000€ |
| empty | 0€ | 1.000€ |

We have two cases: (a) the money is in box A, or (b) box A is empty. In each case playing 2-box is strictly preferable to playing 1-box. So, with the sure-thing principle, we play 2-box.

Another reasoning strategy is to consider expected utilities. The basic idea here is that expected utility of an action is the sum of the utility of the possible outcomes weighted by their probability (conditional on the given choice). This is best understood when applied to the example.

- We first consider the expected utility of playing 1-box. It is given by: \[ EU(\mathtt{1box}) = 0.9 \cdot u(1.000.000) + 0.1 \cdot u(0) \] The two possible outcomes of playing 1-box are that either the 1.000.000€ are in box A or they are not. The former has probability 0.9. The reason is that the money is only in box A if \(\Omega\) correctly predicted that I play 1-box and her accuracy is 90%. The other case therefore has probability 0.1.

- As for playing 2-box we have: \[ EU(\mathtt{2box}) = 0.1 \cdot u(1.001.000) + 0.9 \cdot u(1.000) \]

So, if we equate the utility with the amount of money we get, it is easy to see that playing 1-box is vastly superior to playing 2-box.
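Both lines of reasoning can be replayed in a short sketch. As in the text, utility is equated with the amount of money; the names `payoff`, `dominates` and `expected_utility` are our own.

```python
accuracy = 0.9  # Omega's prediction accuracy

# Payoff in euros for each (choice, state of box A).
payoff = {("1box", "full"): 1_000_000, ("1box", "empty"): 0,
          ("2box", "full"): 1_001_000, ("2box", "empty"): 1_000}
states = ["full", "empty"]


def dominates(a, b):
    """Sure-thing reasoning: a is at least as good as b in every state
    and strictly better in some state."""
    return (all(payoff[a, s] >= payoff[b, s] for s in states)
            and any(payoff[a, s] > payoff[b, s] for s in states))


def expected_utility(choice):
    """Outcomes weighted by their probability conditional on the choice.
    Box A is full exactly when Omega predicted 1-box."""
    p_full = accuracy if choice == "1box" else 1 - accuracy
    return p_full * payoff[choice, "full"] + (1 - p_full) * payoff[choice, "empty"]


print(dominates("2box", "1box"))            # True
print(round(expected_utility("1box")))      # 900000
print(round(expected_utility("2box")))      # 101000
```

The dominance check treats the states as fixed independently of the choice, while the expected-utility computation lets the probability of each state depend on the choice. This difference is exactly where the two recommendations part ways.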

Which reasoning process to trust?

Without trying to resolve the puzzle, I want to at least warn against applications of sure-thing reasoning when some of the given cases are probabilistically or causally dependent on the choices. E.g., in our case, the higher the probability that I play 1-box, the higher the probability that there is money in box A. To see the problem more clearly, we consider another example by Horty.

We have a student who is wondering whether to study for the exam or to slack. The possible outcomes are listed in the table:

With the sure-thing principle we can argue with the case distinction:

- If I fail, I am better off slacking (since \(\mathsf{u}(h_{11}) = 2 > \mathsf{u}(h_{22}) = 0\)).

- If I pass, I am better off slacking (since \(\mathsf{u}(h_{10}) = 6\) exceeds the utility of 4 that I get from studying and passing).

Thus, I should slack.

The problem, however, is that there is a dependency between studying and the likelihood of passing the exam which is entirely ignored in the above application of the sure-thing principle.8

Indeed, if we reason with expected utility we have a quite different picture (with some made up probabilities):

- \(EU(\mathtt{study}) = 0.8 \cdot 4 + 0.2 \cdot 0 = 3.2\) vs.

- \(EU(\mathtt{slack}) = 0.1 \cdot 6 + 0.9 \cdot 2 = 2.4\)

Exercise. Check what happens if the likelihood of passing given studying is lower.
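One way to explore this exercise is to vary the probability of passing given studying while keeping everything else fixed. The sketch below uses the utilities and the (made-up) slacking probabilities from the computation above; the function name `eu` and the sampled probabilities are our own choices.

```python
def eu(p_pass, u_pass, u_fail):
    """Expected utility of an action with two outcomes: pass or fail."""
    return p_pass * u_pass + (1 - p_pass) * u_fail


# Slacking: pass with probability 0.1 (utility 6), else fail (utility 2).
eu_slack = eu(0.1, 6, 2)

# Studying: utility 4 when passing, 0 when failing; vary the pass probability.
for p in (0.8, 0.7, 0.6, 0.5):
    eu_study = eu(p, 4, 0)
    better = "study" if eu_study > eu_slack else "slack (or tie)"
    print(f"p = {p}: EU(study) = {eu_study:.2f}, EU(slack) = {eu_slack:.2f} -> {better}")

# Break-even probability p with 4 * p + 0 * (1 - p) = EU(slack).
break_even = (eu_slack - 0) / (4 - 0)
print(f"break-even at p = {break_even:.2f}")
```

Under these numbers, studying only has the higher expected utility as long as the chance of passing after studying stays above roughly 0.6.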

1. As long as we have finitely many histories, \(\mathsf{Ought}_m\) will be non-empty. Otherwise, one may need to proceed slightly differently from the way presented in this blog post. For details see Horty’s (2001) “Agency and Deontic Logic”, Oxford University Press, which I highly recommend for further details on the topics presented here.

2. As in previous entries on stit-logic I often reduce notational clutter by omitting the implicitly given model \(M\). So instead of \(M, m/h \models A\) I often write \(m/h \models A\).

3. Horty refers to this proposal as the Meinong/Chisholm approach. More discussion of the philosophical background of this approach, which aims to express “ought to do” in terms of “ought to be”, can be found in Horty’s book.

4. For non-temporal CTD-cases see Prakken & Sergot, Contrary-to-duty obligations, Studia Logica, 57(1), 91–115 (1996).

5. Examples like this one motivate enriching the stit-framework with probabilities. This has been done, for instance, in Jan Broersen, Probabilistic stit logic and its decomposition, International Journal of Approximate Reasoning, Volume 54, Issue 4, 2013.

6. Recall that in the context of a moment \(m\) and a model \(M\) the notation \(|A|_m\) (or simply \(|A|\)) denotes the set \(\{ h \in H_m \mid M,m/h \models A \}\).

7. See Nozick, Robert. “Newcomb’s problem and two principles of choice.” Essays in honor of Carl G. Hempel. Springer, Dordrecht, 1969. 114-146.

8. What makes the Newcomb problem so much more puzzling and the sure-thing principle so much more appealing is that at the moment we choose which game to play the decision whether the money is in box A has already been made, unlike the case where studying has a causal influence on our future performance in the exam.